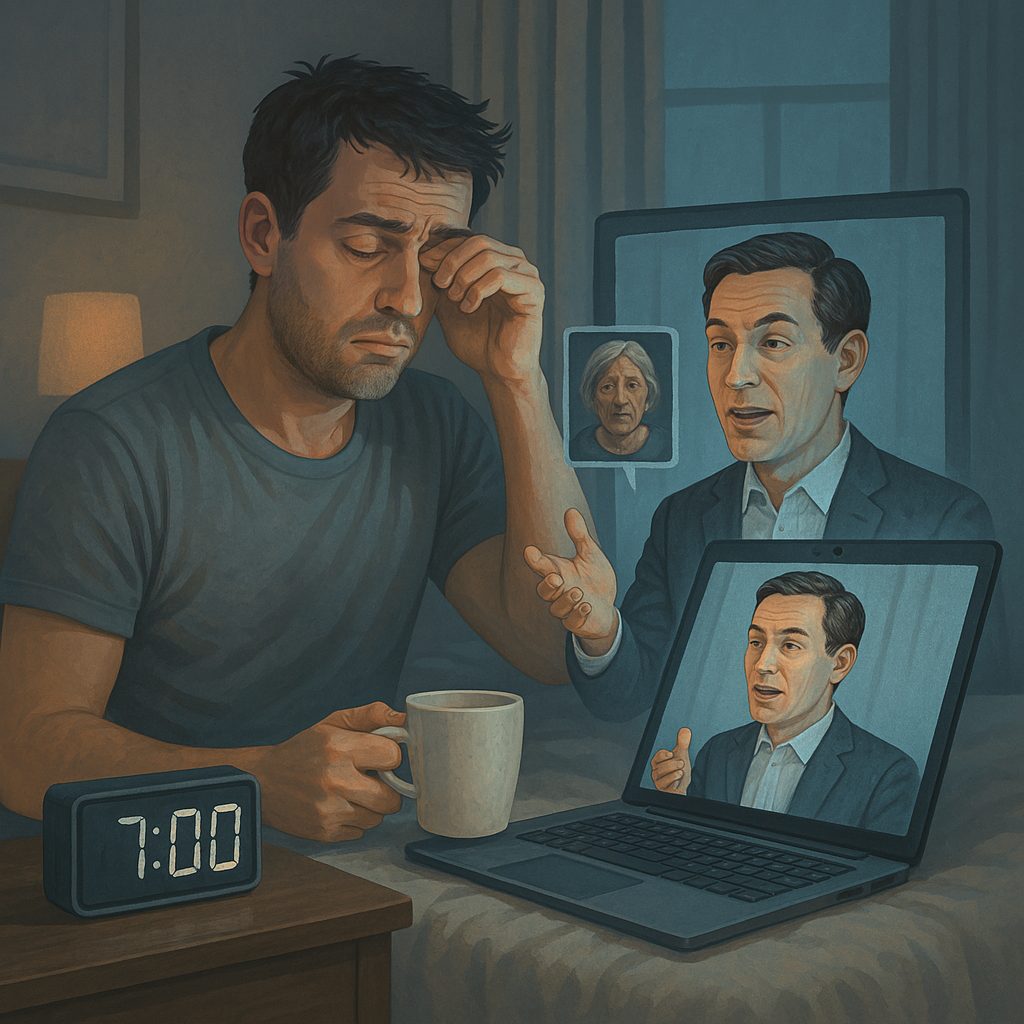

Imagine this: It’s 7 AM, and as you wipe the sleep from your eyes, your digital twin has been busy for hours. It has responded to 32 emails in your tone, participated in a Tokyo strategy meeting using your facial expressions and speaking style, and even soothed an upset client, all before your first sip of coffee. This is not a far-off scenario. Picture corporate leaders creating AI copies of themselves to manage everyday tasks. In South Korea, mourning families are already using “memory bots” to converse with AI representations of lost loved ones.

What makes these digital twins remarkable, and somewhat unsettling, is their ability to blur the distinction between human and machine. Unlike the awkward chatbots of five years ago, which struggled to hold a coherent conversation, modern AI replicas process everything from your LinkedIn updates to your Zoom call recordings to create an eerily precise duplicate. They don’t merely repeat your words; they imitate your hesitation before difficult questions, your distinctive sarcasm in Slack chats, and even your nervous habit of saying “um” when presenting.

The consequences can be astonishing. Imagine a law firm utilizing an AI replica of their top litigator to prepare witnesses—without the lawyer having to cut his Bali vacation short. Or a TikTok influencer finding out that her AI twin had been streaming live to her followers while she was in the hospital. Companies like Eternos.life are assisting terminally ill individuals in creating digital identities that capture their voice, personality, and memories, allowing their families to interact with their digital personas after their passing.

Despite their potential, these digital twins raise unsettling questions: When your AI counterpart decides something, is it genuinely you? Can an algorithm truly reflect human decision-making? In a world where anyone can be digitally replicated, what becomes of our basic sense of identity? As we stand at this technological crossroads, one fact is evident: the era of digital replicas isn’t approaching. It has arrived, and it is transforming our understanding of humanity in ways we are just starting to comprehend.

How AI Digital Twins Are Built

Creating a convincing AI twin isn’t as simple as feeding a few voice notes into a computer. These digital replicas are built using massive amounts of personal data—everything from speech patterns and writing style to facial expressions and decision-making habits. Companies like Synthesia and DeepBrain AI specialize in crafting these lifelike avatars by training AI models on recorded interviews, social media posts, emails, and even biometric data like vocal tone and emotional inflection.

Once the data is collected, it’s processed by large language models (LLMs) such as GPT-4 or Claude, which learn to mimic the person’s communication style. Advanced neural networks then refine the AI’s responses to make them sound natural, while generative video tools (like OpenAI’s Sora) can animate the digital twin to move and gesture realistically. The end result? An AI clone that can hold conversations, make decisions, and even express emotions, almost indistinguishable from the real person.
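To make the "learn to mimic the person's communication style" step a little more concrete, here is a minimal sketch in Python. It is not how any of the companies named above actually build their products. It is a toy illustration that assumes a twin's style can be roughly summarized from a few writing samples and turned into instructions for a language model. The function names (`build_style_profile`, `build_persona_prompt`) and the filler-word list are hypothetical, and no real LLM is called.

```python
import re
from collections import Counter

# Hypothetical filler words to look for; a real system would learn these from data.
FILLERS = {"um", "uh", "honestly", "basically", "actually"}


def build_style_profile(samples):
    """Summarize simple, surface-level habits from a person's writing samples."""
    sentences = []
    words = []
    for text in samples:
        # Split on sentence-ending punctuation; crude, but enough for a sketch.
        sentences += [s for s in re.split(r"[.!?]+", text) if s.strip()]
        words += re.findall(r"[a-z']+", text.lower())
    avg_len = len(words) / len(sentences) if sentences else 0.0
    filler_counts = Counter(w for w in words if w in FILLERS)
    return {
        "avg_sentence_words": round(avg_len, 1),
        "fillers": [w for w, _ in filler_counts.most_common(3)],
    }


def build_persona_prompt(name, samples, max_examples=3):
    """Assemble a text prompt that asks a language model to imitate the person."""
    profile = build_style_profile(samples)
    lines = [
        f"Reply as {name} would.",
        f"Typical sentence length: about {profile['avg_sentence_words']} words.",
    ]
    if profile["fillers"]:
        lines.append("Habitual filler words: " + ", ".join(profile["fillers"]) + ".")
    lines.append("Writing samples:")
    lines += [f"- {s}" for s in samples[:max_examples]]
    return "\n".join(lines)


if __name__ == "__main__":
    demo = [
        "Um, honestly I think we ship Friday.",
        "Um, let me check. Sounds good!",
    ]
    print(build_persona_prompt("Dana", demo))
```

The resulting prompt would then be handed to whichever language model powers the twin. Real systems presumably go much further, with far more data plus voice and video modeling, but the basic idea of turning personal data into a style description is the same.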

How Can AI Digital Twins Be Used?

1. Virtual CEOs and Corporate Decision-Makers

Picture an AI-generated CEO named “Ava” overseeing a company’s operations. The digital executive analyzes market trends, communicates with investors, and makes data-driven decisions without ever needing a coffee break. Supporters argue that AI leaders eliminate human bias and fatigue, but critics warn that they lack intuition and emotional intelligence, which could lead to disastrous business moves if left unchecked.

2. AI Therapists and Mental Health Coaches

Apps like Woebot and Replika use conversational AI, rather than clones of specific therapists, to provide 24/7 emotional support. Some of these digital counselors, Woebot among them, draw on cognitive behavioral therapy (CBT) techniques to help users manage anxiety, depression, and stress. While they offer instant, judgment-free assistance, there’s concern that over-reliance on AI therapy could replace human connection or, worse, give harmful advice if the system malfunctions.

3. Digital Immortality: Bringing Back the Departed

One of the most controversial uses of AI digital twins is recreating deceased loved ones. Startups like Project December allow grieving families to chat with AI versions of relatives who have passed away. For some, it’s a comforting way to preserve memories; for others, it raises ethical questions about consent and the morality of “resurrecting” the dead through technology.

4. AI Influencers and Virtual Celebrities

Lil Miquela, a computer-generated virtual influencer on Instagram, has amassed millions of followers without ever being a real person. Brands love her because she never ages, never gets canceled, and always stays on-message. But as AI celebrities become more common, will they push real human creators out of the spotlight?

5. AI Customer Service and Sales Reps

Companies are now deploying AI clones of their best employees to handle customer interactions. Platforms like DupDub create digital sales reps that mimic real staff in video calls, offering personalized pitches without the need for human involvement. While this cuts costs, it also risks frustrating customers if the AI comes across as too robotic or scripted.

The Ethical Dilemmas: What Could Go Wrong?

As exciting as AI digital twins are, they come with serious risks:

1. Identity Theft and Unauthorized Cloning

What happens if someone creates an AI version of you without your permission? There have already been cases of journalists and celebrities discovering deepfake interviews they never gave. Without strict regulations, anyone could be impersonated for scams, misinformation, or fraud.

2. Emotional Dependence on AI

If people start confiding in AI therapists, dating AI partners, or even “talking” to AI versions of lost loved ones, could it erode real human relationships? Studies show that some users of apps like Replika develop deep emotional attachments to their AI companions—raising concerns about social isolation.

3. Job Displacement

If AI CEOs, lawyers, and doctors become the norm, what happens to human professionals? Some experts predict that by 2030, 40% of executive tasks could be automated, leaving many highly skilled workers obsolete.

4. Legal Gray Areas

Who’s responsible if an AI CEO makes a bad financial decision? Can an AI clone sign legal documents? What if an AI therapist gives harmful advice? Right now, the law hasn’t caught up with these questions.

The Future: Will We All Have AI Clones?

Looking ahead, AI digital twins are only going to become more advanced—and more common. Within the next decade, we might see:

Personal AI Assistants – Your digital twin handling emails, meetings, and social media while you focus on more meaningful tasks.

Legacy Avatars – People recording AI versions of themselves for future generations to interact with.

Stricter Regulations – Governments implementing “AI identity verification” to prevent misuse.

The big question isn’t whether we can create AI clones—it’s whether we should. The technology offers incredible benefits, but without careful oversight, it could lead to privacy violations, emotional harm, and even societal disruption.

Final Thoughts: A Double-Edged Sword

AI digital twins are becoming a permanent fixture, and they are already transforming how we work, interact, and even mourn. The key will be balancing innovation with ethics, making sure that these digital personas benefit humanity instead of exploiting it.

So, would you like an AI version of yourself? Or is this a Pandora’s box that we should leave shut? Let’s talk about it.